Wiki Education announced its 2025 findings regarding generative AI usage among new Wikipedia contributors, sharing insights intended to inform policy discussions across the Wikimedia movement. The organization, responsible for roughly nineteen percent of new active editors on English Wikipedia, conducted extensive testing using the Pangram AI detection tool on articles created through its programs since late 2022.

The fundamental conclusion reached by Wiki Education is that editors must never copy and paste content directly from large language models such as ChatGPT or Claude into encyclopedia articles. While initial concerns focused on fabricated citations, the investigation uncovered a more insidious problem: text that cites plausible-looking sources but makes claims those sources do not support.

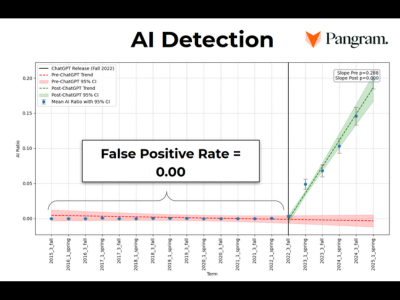

Chief Technology Officer Sage Ross ran over three thousand articles through the Pangram detector and found a steady rise in AI-flagged text that coincided with the launch of tools like ChatGPT. Only seven percent of the flagged articles contained entirely fake sources, but more than two-thirds failed verification: the information claimed in the article did not actually appear in the cited source.

These verification failures required significant staff time to remediate, as Wiki Education staff corrected or removed flawed contributions to uphold the encyclopedia's integrity. Cleanup included moving recent work back to sandboxes, reducing articles to stubs, or initiating the Articles for Deletion process for unsalvageable content generated entirely by AI.

In response, Wiki Education developed a new training module explicitly forbidding the copy-pasting of GenAI output and began integrating real-time Pangram detection into its Dashboard course management platform. This proactive monitoring generated 1,406 AI edit alerts in the latter half of 2025, though only twenty-two percent of those alerts occurred in the main article namespace.
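To put the alert figures in absolute terms, the short calculation below converts the reported share into approximate counts. The twenty-two percent figure is rounded in the source, so the results are estimates only; the function name `split_alerts` is made up for this illustration.

```python
def split_alerts(total_alerts: int, mainspace_share: float) -> tuple[int, int]:
    """Estimate (mainspace, other-namespace) alert counts from a rounded share."""
    mainspace = round(total_alerts * mainspace_share)
    return mainspace, total_alerts - mainspace


# Figures from the article: 1,406 alerts, about 22% in the main article namespace.
mainspace, elsewhere = split_alerts(1406, 0.22)
print(f"main namespace: ~{mainspace}, sandboxes and other namespaces: ~{elsewhere}")
```

Under these assumptions, roughly 309 alerts concerned live articles, while about 1,097 fired in sandboxes and other namespaces.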

During early exercises, Pangram frequently flagged content in sandboxes, particularly during bibliography creation or outline drafting, where high proportions of non-prose text confused the detector. These false positives highlight the technical challenges in accurately assessing AI-generated content when it is mixed with structured data or formatting elements.
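One way to picture the non-prose problem is a pre-filter that estimates how much of a page is running prose before any detector is consulted. The sketch below is purely illustrative: the prefix list, the sentence test, the 0.5 threshold, and the names `prose_ratio` and `worth_scoring` are all assumptions, and nothing here reflects how Pangram or the Dashboard actually works.

```python
import re

# Hypothetical heuristic: prefixes that usually mark non-prose wikitext lines
# (list items, headings, templates, table markup, indented or definition lines).
NON_PROSE_PREFIXES = ("*", "#", "=", "{{", "{|", "|", "!", ":", ";")


def prose_ratio(wikitext: str) -> float:
    """Return the fraction of non-blank characters found on prose-like lines."""
    prose_chars = 0
    total_chars = 0
    for raw_line in wikitext.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        total_chars += len(line)
        # Treat a line as prose only if it lacks a markup prefix and
        # contains a lowercase word followed by sentence punctuation.
        has_sentence = re.search(r"[a-z]{2,}[.!?]", line) is not None
        if not line.startswith(NON_PROSE_PREFIXES) and has_sentence:
            prose_chars += len(line)
    return prose_chars / total_chars if total_chars else 0.0


def worth_scoring(wikitext: str, min_ratio: float = 0.5) -> bool:
    """Assumed policy: only score pages that are mostly running prose."""
    return prose_ratio(wikitext) >= min_ratio
```

With this heuristic, a sandbox holding only a heading and a bulleted bibliography would have a prose ratio of zero and be skipped, while a drafted paragraph would pass through. A real system would need a proper wikitext parser rather than line prefixes.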

The organization shared these learnings openly, aiming to support Wikipedia editors tasked with content integrity, program leaders onboarding new contributors, and the Wikimedia Foundation’s technology teams. The review underscores that while generative AI presents opportunities, its current output necessitates stringent human oversight for factual accuracy, particularly concerning citation validation.

Looking ahead, the findings compel a reevaluation of onboarding processes across editor training programs globally, emphasizing critical thinking over automated drafting when engaging with AI assistance. The diversity of opinion within the Wikipedia community regarding deletion and AI content suggests that policy evolution around these tools will remain a central focus for the near future.