An independent developer announced the release of a compact, nine-million-parameter Mandarin pronunciation tutor designed for on-device operation, according to a report published on simedw.com.

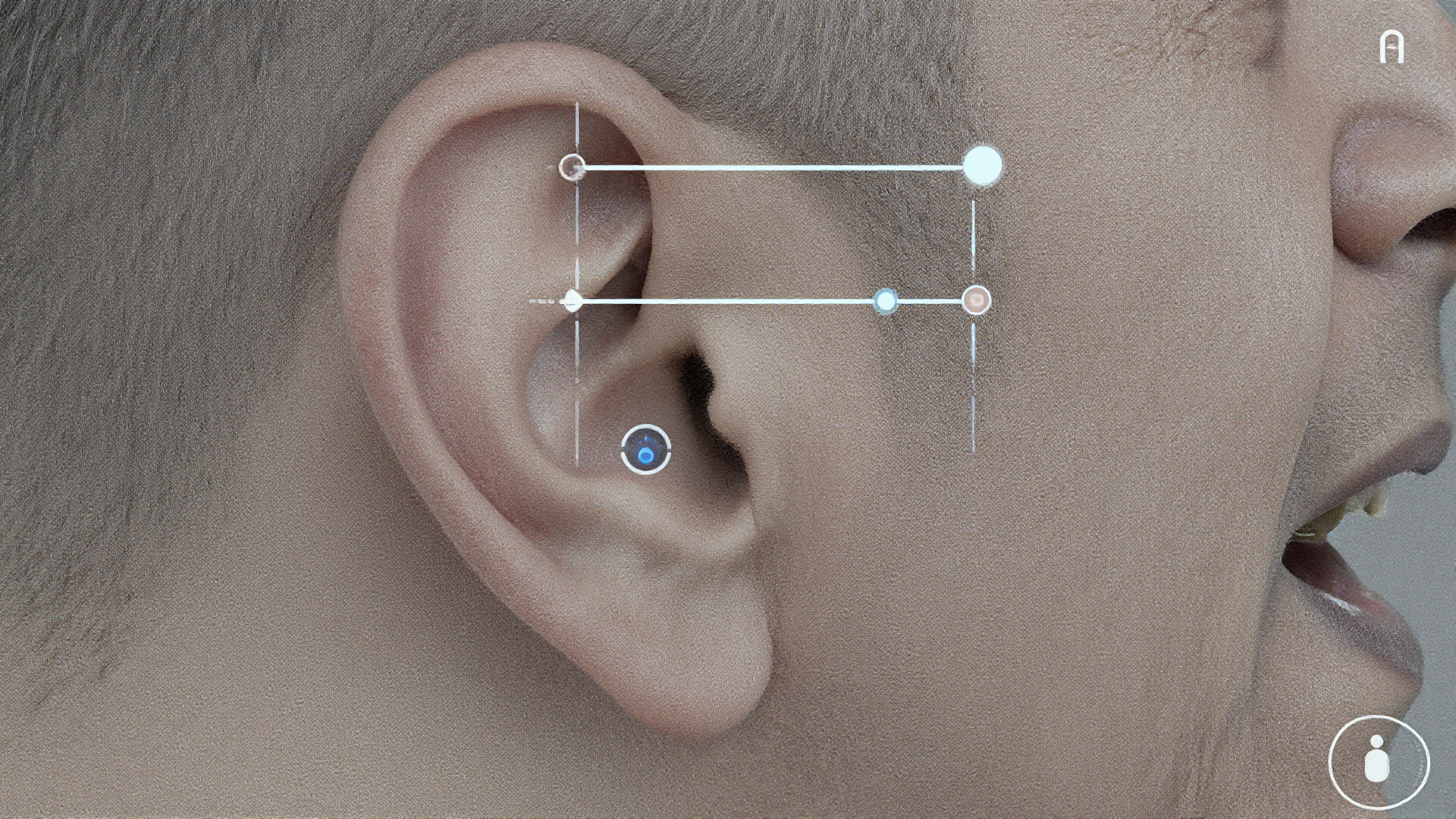

The system was developed after an initial heuristic-based pitch visualization tool proved too brittle to handle real-world audio complexities such as noise and coarticulation.

Treating the problem as a specialized Automatic Speech Recognition (ASR) task, the developer opted for a Conformer encoder trained with Connectionist Temporal Classification (CTC) loss. The Conformer architecture combines convolution, which captures local spectral features, with attention, which captures global context.

Unlike standard sequence-to-sequence ASR models, which may silently correct pronunciation errors to produce the most likely text output, the CTC framework makes the model emit token probabilities on a frame-by-frame basis, exposing what was actually spoken.
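To make the frame-by-frame idea concrete, here is a minimal sketch of greedy CTC decoding over toy per-frame probabilities. It is not the developer's code: the blank index, the token ids, and the probability values are all assumptions chosen for illustration.

```python
from typing import List, Sequence

BLANK = 0  # assumed index of the CTC blank token


def greedy_ctc_decode(
    frame_probs: Sequence[Sequence[float]], blank: int = BLANK
) -> List[int]:
    """Pick the most likely token per frame, merge repeats, drop blanks."""
    decoded: List[int] = []
    previous = blank
    for probs in frame_probs:
        best = max(range(len(probs)), key=probs.__getitem__)
        # Emit a token only when it is not blank and differs from the
        # previous frame's choice (consecutive repeats are merged).
        if best != blank and best != previous:
            decoded.append(best)
        previous = best
    return decoded


# Toy posteriors over 3 tokens: 0 = blank, 1 and 2 = hypothetical syllable ids.
frames = [
    [0.90, 0.05, 0.05],  # silence
    [0.10, 0.80, 0.10],  # token 1
    [0.20, 0.70, 0.10],  # token 1 again, merged with the previous frame
    [0.80, 0.10, 0.10],  # blank separates tokens
    [0.10, 0.20, 0.70],  # token 2
]
print(greedy_ctc_decode(frames))  # [1, 2]
```

Because every frame carries its own distribution, a mispronounced syllable shows up as probability mass on the wrong token in specific frames rather than being smoothed over by a language model.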

The developer established a vocabulary of 1,254 tokens representing Pinyin syllables with their tones, avoiding a Hanzi representation, which would obscure phonetic mistakes.
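A tone-aware Pinyin vocabulary of this kind might be sketched as follows. The three-syllable inventory, the numeric tone suffix (with 5 standing for the neutral tone), and the `<blank>` entry at id 0 are illustrative assumptions; the article does not describe how the actual 1,254 tokens are composed.

```python
from typing import Dict, List


def build_vocab(syllables: List[str], tones: range = range(1, 6)) -> Dict[str, int]:
    """Map each syllable+tone string (e.g. 'ma3') to an integer id.

    Id 0 is reserved for the CTC blank (an assumption of this sketch).
    """
    vocab = {"<blank>": 0}
    for syllable in syllables:
        for tone in tones:
            vocab[f"{syllable}{tone}"] = len(vocab)
    return vocab


def encode(transcript: str, vocab: Dict[str, int]) -> List[int]:
    """Turn a space-separated Pinyin transcript into token ids."""
    return [vocab[token] for token in transcript.split()]


toy_syllables = ["ma", "ni", "hao"]  # hypothetical subset, not the real inventory
vocab = build_vocab(toy_syllables)

print(len(vocab))                  # 16
print(encode("ni3 hao3", vocab))   # [8, 13]
print(vocab["ma1"], vocab["ma3"])  # 1 3
```

Since `ma1` and `ma3` are separate tokens, a tone error produces a different token id, whereas a Hanzi target would hide the distinction behind the character.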

After training on approximately 300 hours of combined AISHELL-1 and Primewords data, the initial 75M-parameter model was aggressively pruned down to the nine-million-parameter version, retaining high accuracy while achieving an 11 MB footprint post-quantization.
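As a rough sanity check on the footprint, the weight storage can be estimated from the parameter count alone. The article does not state the quantization bit-width, so the 8-bit figure below is an assumption, and the estimate ignores metadata and any layers kept at higher precision.

```python
def weight_megabytes(num_params: int, bytes_per_param: int) -> float:
    """Approximate weight storage in megabytes (1 MB = 1,000,000 bytes)."""
    return num_params * bytes_per_param / 1_000_000


PARAMS = 9_000_000  # nine-million-parameter model from the article

print(weight_megabytes(PARAMS, 4))  # 36.0 (32-bit floats)
print(weight_megabytes(PARAMS, 1))  # 9.0  (8-bit integers, assumed)
```

Under that assumption, roughly 9 MB of weights plus some overhead is in the same range as the reported 11 MB.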

Testing revealed a critical alignment bug related to leading silence, which was resolved by decoupling the UI highlighting spans from the scoring frames to prevent blank tokens from dominating confidence metrics.
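One way to picture the fix is to keep the full aligned span for highlighting while scoring only the frames that are not dominated by the blank token. The sketch below assumes a simple blank-probability threshold for selecting scoring frames; the article does not say how the developer actually chose them, and the function names and values are hypothetical.

```python
from typing import Sequence, Tuple


def naive_score(
    frame_probs: Sequence[Sequence[float]], span: Tuple[int, int], token: int
) -> float:
    """Average the token probability over every frame in the span."""
    start, end = span
    values = [probs[token] for probs in frame_probs[start:end]]
    return sum(values) / len(values) if values else 0.0


def decoupled_score(
    frame_probs: Sequence[Sequence[float]],
    span: Tuple[int, int],
    token: int,
    blank: int = 0,
    blank_threshold: float = 0.5,  # assumed cutoff, not from the article
) -> float:
    """Average the token probability only over frames where blank is weak."""
    start, end = span
    values = [
        probs[token]
        for probs in frame_probs[start:end]
        if probs[blank] < blank_threshold
    ]
    return sum(values) / len(values) if values else 0.0


# Toy posteriors: index 0 = blank, index 1 = the spoken syllable.
frames = [
    [0.98, 0.02],  # leading silence absorbed into the first syllable's span
    [0.98, 0.02],
    [0.98, 0.02],
    [0.10, 0.90],  # the syllable itself
    [0.10, 0.90],
]
ui_span = (0, 5)  # the highlight still covers the whole aligned region

print(round(naive_score(frames, ui_span, token=1), 3))      # 0.372
print(round(decoupled_score(frames, ui_span, token=1), 3))  # 0.9
```

In the naive version, three silent frames drag a well-pronounced syllable down to a low confidence; in the decoupled version, the UI span is unchanged but the score reflects only the frames where speech was present.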

Early beta testers report that the system is effective for improving pronunciation, although native speakers noted that the model required them to over-enunciate. This suggests that future work should incorporate more conversational datasets to address domain shift.